AI, Literacy, and the Evidence Gap: Key Takeaways From Boston University on Amira Learning

A cognitive neuroscientist explains why rigorous evaluation—not hype—should guide how AI shows up in reading instruction.

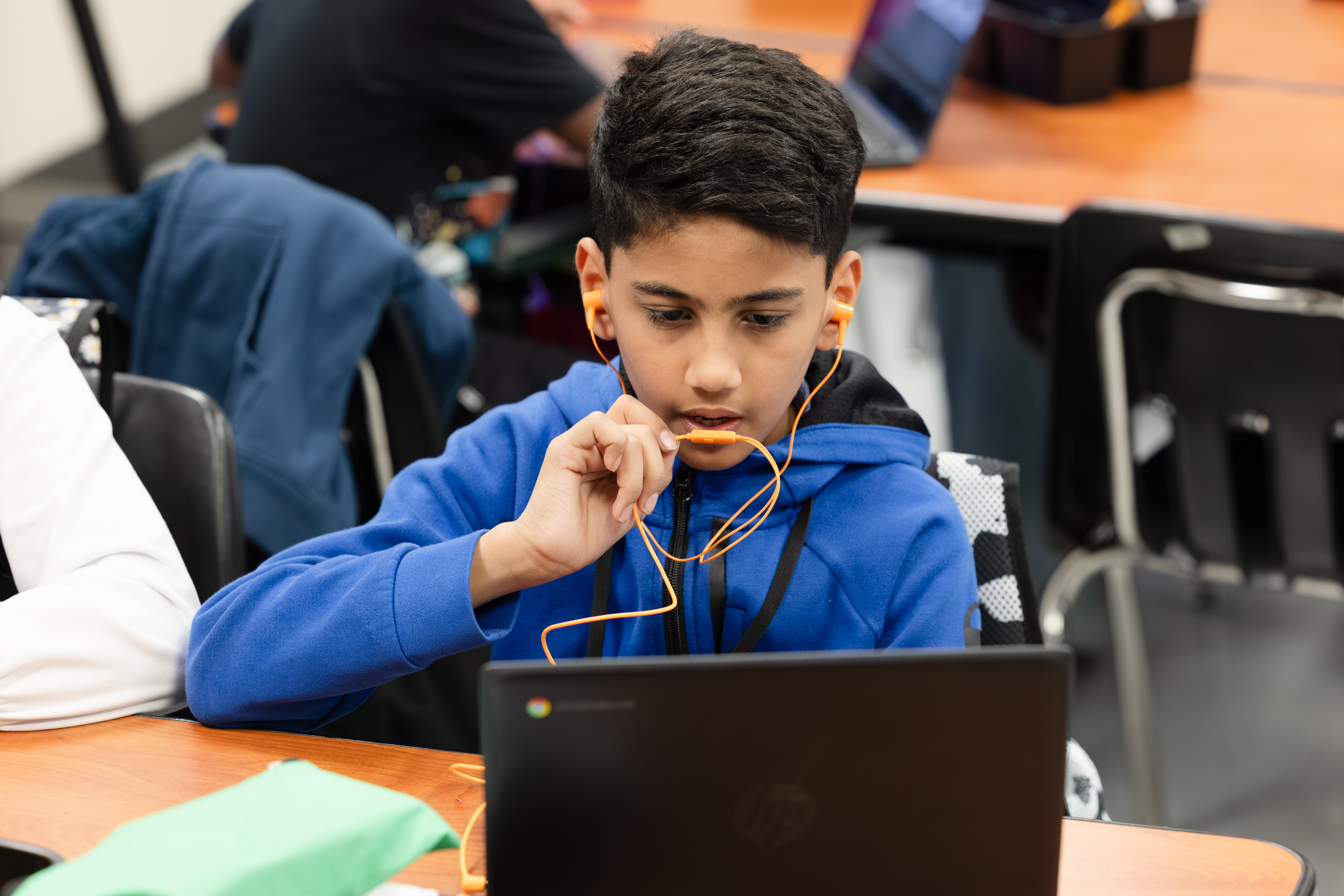

American schools are facing a literacy crisis, and the urgency is real, especially for students who have historically been underserved. At the same time, artificial intelligence is entering classrooms fast. The question isn’t whether AI will show up in literacy instruction. It already has. The question is whether AI will strengthen evidence-based teaching or accelerate the adoption of tools that sound promising but haven’t been proven to improve learning.

In a recent essay, cognitive neuroscientist Ola Ozernov-Palchik (Boston University) lays out both the promise and the risk of AI in education. Her message is clear: innovation can’t outrun evidence. If AI is going to reshape literacy instruction, districts and developers alike need stronger standards for validation, transparency, and independent evaluation.

Boston University’s evaluation collaborative has selected Amira Learning through its inaugural edtech evaluation challenge, launching an independent research effort designed to build credible evidence about what works, for whom, and under what conditions. Amira’s technology traces back more than two decades to Carnegie Mellon University’s Project LISTEN and has accumulated a growing body of independent efficacy research. The BU collaboration reflects Amira’s commitment to going further: inviting rigorous, university-led evaluation to strengthen that evidence base.

In a market where AI is everywhere, this kind of transparent, rigorous evaluation helps districts separate momentum from meaningful impact.

Read the full BU announcement: https://www.bu.edu/hic/2026/03/03/boston-universitys-eval-collaborative-names-amira-learning-winner-of-inaugural-eval-edtech-evaluation-challenge-advancing-evidence-based-literacy-reform-in-massachusetts/

Read the full article by Ola Ozernov-Palchik (paywalled): https://www.bostonglobe.com/2026/03/12/opinion/literacy-ai-say-more-podcast/

